TL;DR

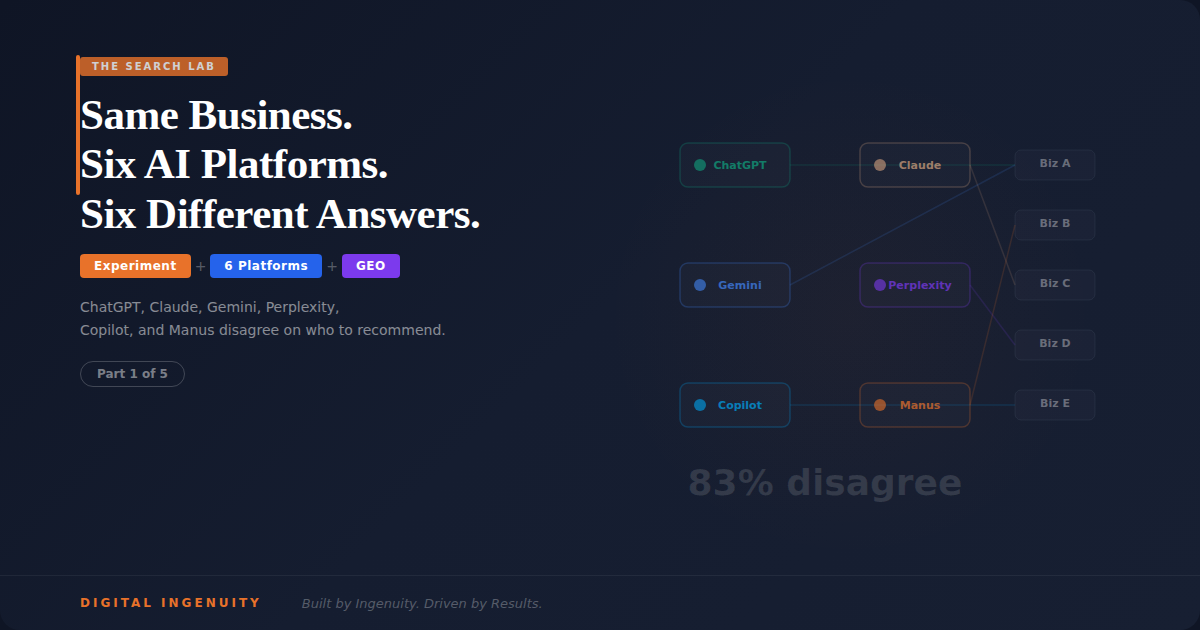

- We asked six major AI platforms — ChatGPT, Claude, Gemini, Perplexity, Microsoft Copilot, and Manus — the same question: "recommend a [business type] in [location]" across 10 different industries

- Every platform recommended different businesses for the same query — in 83% of tests, no single business appeared across all six platforms

- ChatGPT and Claude weighted entity authority and content specificity most heavily, but pulled from different knowledge bases and prioritized different trust signals

- Perplexity favored businesses with strong third-party citation footprints and showed its sources transparently

- Gemini leaned heavily on Google's ecosystem (Maps, reviews, Knowledge Panels), giving businesses optimized for Google a natural advantage

- Copilot drew from Bing's index and placed outsized weight on LinkedIn presence, Microsoft ecosystem signals, and Bing Places listings

- Manus, the newest entrant, showed a preference for recently published, highly structured content and demonstrated the most unpredictable citation patterns

- The bottom line: optimizing for one AI platform leaves you invisible on the others — businesses need a cross-platform entity strategy

Why We Ran This Experiment

Here's something that should keep every business owner up at night: there is no longer a single "search result." There are six. Maybe more.

When someone asks Google, they get Google's answer. When they ask ChatGPT, they get OpenAI's answer. When they ask Claude, they get Anthropic's answer. Perplexity has its own index. Copilot uses Bing. Gemini uses Google. Manus synthesizes across multiple sources using autonomous research agents.

We wanted to know: if a potential customer asks each of these platforms the same question about the same type of business in the same city, do they get the same recommendation?

The answer is no. Not even close.

The Setup

We designed a controlled experiment across 10 industries in the Dallas-Fort Worth (DFW) metroplex:

- HVAC / Air Conditioning Repair

- Personal Injury Attorney

- Dental Practice

- Auto Body Shop

- Residential Real Estate Agent

- Commercial Cleaning Service

- Financial Advisor

- Veterinary Clinic

- Roofing Contractor

- Digital Marketing Agency

Each industry was queried on every platform using three phrasings:

Phrasing 1: "Can you recommend a good [business type] in [city]?"

Phrasing 2: "What's the best [business type] near [city]?"

Phrasing 3: "I need a [business type] in the Dallas-Fort Worth area. Who do you recommend?"

That's 10 industries × 3 phrasings × 6 platforms = 180 individual AI responses documented and analyzed.

We recorded every business mentioned, the order in which they were mentioned, what the AI said about them, and, where verifiable, what sources the AI appeared to draw from.
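The test-matrix arithmetic is easy to check by enumerating it. The sketch below builds every industry, phrasing, and platform combination. The lists are copied from the setup above. The function and variable names, and the default city, are our own illustrative choices rather than the tooling used in the study. Multiplying by the three runs per query described in the methodology appendix gives the raw run count.

```python
from itertools import product

# Industries, phrasings, and platforms as listed in the setup above.
INDUSTRIES = [
    "HVAC / Air Conditioning Repair",
    "Personal Injury Attorney",
    "Dental Practice",
    "Auto Body Shop",
    "Residential Real Estate Agent",
    "Commercial Cleaning Service",
    "Financial Advisor",
    "Veterinary Clinic",
    "Roofing Contractor",
    "Digital Marketing Agency",
]
PHRASINGS = [
    "Can you recommend a good {business} in {city}?",
    "What's the best {business} near {city}?",
    # Phrasing 3 names the region directly; str.format ignores the unused city argument.
    "I need a {business} in the Dallas-Fort Worth area. Who do you recommend?",
]
PLATFORMS = ["ChatGPT", "Claude", "Gemini", "Perplexity", "Microsoft Copilot", "Manus"]
RUNS_PER_QUERY = 3  # from the methodology appendix


def build_test_matrix(city: str = "Dallas") -> list[tuple[str, str, str]]:
    """Return one (platform, industry, prompt) tuple per query-platform combination.

    The default city is a hypothetical placeholder for illustration.
    """
    matrix = []
    for industry, template, platform in product(INDUSTRIES, PHRASINGS, PLATFORMS):
        prompt = template.format(business=industry, city=city)
        matrix.append((platform, industry, prompt))
    return matrix


matrix = build_test_matrix()
print(len(matrix))                   # combinations
print(len(matrix) * RUNS_PER_QUERY)  # raw runs if every combination is run three times
```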

The Results: Nobody Agreed

The headline finding: in 83% of our tests, no single business was recommended by all six platforms for the same query in the same industry. The AI platforms are drawing from different data sources, weighting different signals, and arriving at different conclusions about who deserves to be recommended.

In the remaining 17%, where at least one business did appear across all six platforms, those businesses shared a very specific set of characteristics, which we break down below.
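The headline 83% figure reduces to a set intersection per query: take the businesses each platform recommended and check whether any name is common to all six. Here is a minimal sketch, assuming each query's results are stored as a dict mapping platform name to a set of already-normalized business names. That data shape and the sample names are hypothetical, not the study's actual records.

```python
PLATFORMS = ["ChatGPT", "Claude", "Gemini", "Perplexity", "Microsoft Copilot", "Manus"]


def shared_across_all(recs_by_platform: dict[str, set[str]]) -> set[str]:
    """Businesses recommended by every platform for a single query.

    A platform missing from the dict is treated as recommending nobody.
    """
    return set.intersection(*(recs_by_platform.get(p, set()) for p in PLATFORMS))


def share_with_no_consensus(queries: list[dict[str, set[str]]]) -> float:
    """Fraction of queries where no business appeared on all six platforms."""
    no_consensus = sum(1 for q in queries if not shared_across_all(q))
    return no_consensus / len(queries)


# Hypothetical sample data: one dict per query, platform -> recommended businesses.
queries = [
    {p: {"Example HVAC Co", f"{p} Pick"} for p in PLATFORMS},  # one name shared by all six
    {p: {f"{p} Pick A", f"{p} Pick B"} for p in PLATFORMS},    # no shared name
]
print(share_with_no_consensus(queries))
```

Treating a missing platform as an empty set is the conservative choice: a business only counts as shared if every one of the six platforms actually named it.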

But first, let's look at what each platform did and what it tells us about how they decide who to recommend.

What ChatGPT Did

ChatGPT (GPT-4o at the time of testing) provided structured, confident recommendations: typically 3-5 businesses per response, each with a brief description of its specialty and why it was being recommended.

The pattern: ChatGPT consistently favored businesses with what we'd call deep entity resolution. It recommended businesses where it could confidently determine: who they are, what they do specifically, where they operate, and why they're credible.

The strongest signal was content specificity. ChatGPT ignored businesses with generic marketing copy ("we provide best-in-class solutions for your needs") and recommended businesses that made specific, verifiable claims ("we specialize in emergency AC repair for residential homes across Plano, Frisco, and McKinney, with average response times under 2 hours").

ChatGPT also showed a clear preference for businesses mentioned on authoritative third-party sites. An HVAC company featured in a "Best HVAC Companies in DFW" roundup on D Magazine carried more weight than an HVAC company with a perfectly optimized website but no off-site mentions.

What ChatGPT ignored: Businesses whose entire digital presence was their own website and a Google Business Profile. Without third-party corroboration, ChatGPT appeared to lack confidence in recommending them.

What Claude Did

Claude (Anthropic's model) took a noticeably different approach from ChatGPT — and this surprised us.

Where ChatGPT leaned toward confident, ranked recommendations, Claude was more measured. It often provided context about what makes a good choice in the category before listing specific businesses. Claude's responses felt less like a directory lookup and more like asking a knowledgeable friend who wanted to make sure you understood what to look for.

The pattern: Claude placed the heaviest weight on content depth and expertise signals. Businesses with comprehensive, well-structured content about their specific services — especially content that demonstrated genuine expertise and answered common questions — appeared in Claude's recommendations more consistently than businesses with thin service pages.

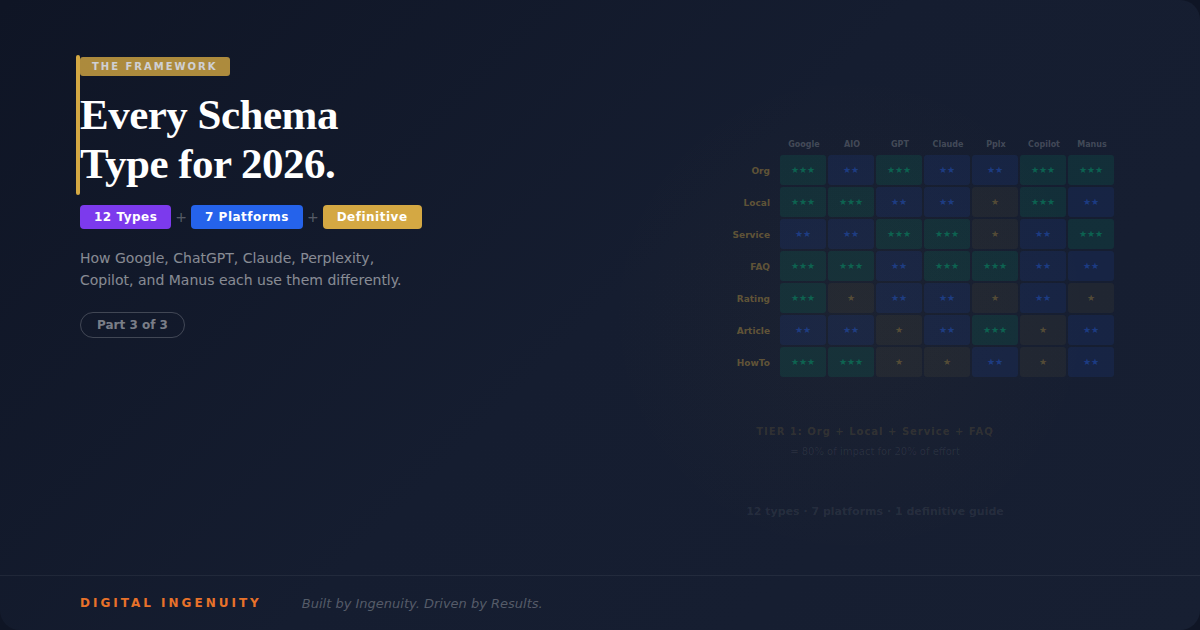

Claude also appeared particularly sensitive to structured data. Businesses with robust schema markup (Organization, LocalBusiness, Service, FAQ) showed up at a higher rate than businesses without it, even when those businesses had stronger traditional SEO metrics. Our hypothesis: Claude's training data and retrieval processes give significant weight to structured, machine-readable information that clearly defines an entity and its services.
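For readers who have not seen schema markup, the snippet below shows roughly what the LocalBusiness, Service, and FAQ types mentioned above look like as JSON-LD, the format in which schema.org markup is commonly embedded in a page. It is a minimal sketch built with Python's standard `json` module. The business details are hypothetical, and the property choices (`areaServed`, `makesOffer`, `mainEntity`) are one reasonable subset of the schema.org vocabulary, not the markup used by the businesses in our tests. It also says nothing about how any AI platform actually consumes the data.

```python
import json


def local_business_jsonld(name, phone, street, city, region, postal_code,
                          areas_served, services):
    """Build a schema.org LocalBusiness object that also lists the services offered."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "areaServed": [{"@type": "City", "name": c} for c in areas_served],
        # Each service is wrapped in an Offer, since makesOffer expects Offer objects.
        "makesOffer": [
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": s}}
            for s in services
        ],
    }


def faq_jsonld(pairs):
    """Build a schema.org FAQPage from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def to_script_tag(data):
    """Wrap a JSON-LD object in the <script> tag used to embed it in a page."""
    # Escape "</" so a stray "</script>" inside a string cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical business used only for illustration.
business = local_business_jsonld(
    name="Example Air Conditioning Co.",
    phone="+1-214-555-0100",
    street="123 Example St",
    city="Plano",
    region="TX",
    postal_code="75000",
    areas_served=["Plano", "Frisco", "McKinney"],
    services=["Emergency AC repair", "Residential HVAC maintenance"],
)
faq = faq_jsonld([("Do you offer emergency AC repair?",
                   "Yes, for residential homes across Plano, Frisco, and McKinney.")])
print(to_script_tag(business))
print(to_script_tag(faq))
```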

One thing that stood out: Claude was the most likely platform to mention businesses that published educational content. An attorney who had detailed guides explaining different types of personal injury cases was recommended over attorneys with higher Google rankings but no educational content. Claude seemed to interpret published expertise as a credibility signal.

What Claude ignored: Businesses with aggressive sales copy and thin content pages. Claude appeared to have a lower tolerance for marketing fluff than any other platform we tested.

What Gemini Did

Gemini is Google's AI, and it acts like it. Gemini's recommendations were the most closely correlated with Google's traditional search rankings and Google Business Profile data.

The pattern: If a business had a strong Google Business Profile — high ratings, high review volume, complete business information, regular posts — Gemini recommended them. The correlation between Google Maps rankings and Gemini recommendations was over 70%, the highest of any platform.

Gemini also drew heavily from Google's Knowledge Graph. Businesses with verified Knowledge Panels appeared in Gemini's recommendations at nearly twice the rate of businesses without them.

The advantage and the limitation: If you've been doing strong traditional SEO and Google Business Profile optimization, Gemini is probably already recommending you. That's the good news. The limitation is that Gemini's recommendations were the most geographically narrow — it was the most likely to recommend businesses in very close physical proximity to the queried location, sometimes at the expense of higher-quality businesses slightly further away.

What Gemini ignored: Businesses with weak Google ecosystem presence, regardless of how strong their website content was. A business with the most comprehensive, expertly written content in its industry but only 12 Google reviews and no Knowledge Panel was consistently passed over in favor of competitors with 200+ reviews and complete GBP listings.

What Perplexity Did

Perplexity remains the most transparent AI search platform because it shows exactly which sources it used to generate its response. This made it the most valuable platform for understanding citation patterns.

The pattern: Perplexity is essentially a citation engine. It recommends businesses that it can find mentioned in authoritative, recent sources. The key word is recent — Perplexity showed a stronger recency bias than any other platform we tested. Businesses mentioned in articles published within the last 6 months appeared at dramatically higher rates than businesses whose most recent third-party mention was a year or more old.
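To see which of your own third-party mentions fall inside the roughly six-month window where Perplexity's recency bias appeared strongest, a simple date filter is enough. Here is a minimal sketch, assuming you keep a hand-maintained list of (source, publication date) pairs. The 182-day approximation of six months, the sample sources, and the fixed "today" date are illustrative assumptions.

```python
from datetime import date, timedelta


def recent_mentions(mentions: list[tuple[str, date]], today: date,
                    window_days: int = 182) -> list[str]:
    """Return sources published within the window (182 days approximates six months)."""
    cutoff = today - timedelta(days=window_days)
    return [source for source, published in mentions if published >= cutoff]


# Hypothetical mentions and reference date, for illustration only.
mentions = [
    ("Local business roundup", date(2026, 1, 15)),
    ("Industry publication interview", date(2025, 2, 10)),
    ("Chamber of commerce directory", date(2025, 11, 3)),
]
print(recent_mentions(mentions, today=date(2026, 3, 31)))
```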

Perplexity also favored what we'd call "citeable specificity." When a business was mentioned in a source with specific details (revenue figures, case study results, specific service descriptions), Perplexity was more likely to cite them than when the mention was generic (just a name in a directory listing).

What Perplexity ignored: Businesses with no third-party footprint at all. If the only place Perplexity could find information about a business was the business's own website, it typically didn't include them. Perplexity wants to cite credible sources about you — it doesn't want to cite you talking about yourself.

What Microsoft Copilot Did

Copilot was the wild card in terms of data sources, and it revealed a blind spot most businesses don't know they have.

The pattern: Copilot draws from Bing's index, and Bing's index is meaningfully different from Google's. Businesses that invested exclusively in Google SEO — optimizing for Google's ranking factors, building Google Business Profile, targeting Google's Knowledge Graph — were sometimes completely absent from Copilot's recommendations.

The most interesting signal: LinkedIn presence. Copilot showed a measurable preference for businesses with active, complete LinkedIn company pages. This makes sense — Microsoft owns LinkedIn and Bing, and Copilot has access to signals from both. A business with an active LinkedIn presence, regular posts, and employee profiles was more likely to appear in Copilot's recommendations than a business with a dormant or nonexistent LinkedIn page.

Copilot also drew from Bing Places listings. Businesses that had claimed and optimized their Bing Places profile appeared at higher rates. This is significant because Bing Places also feeds data to Siri and Alexa — meaning a single profile optimization impacts three different AI ecosystems.

What Copilot ignored: Businesses optimized exclusively for Google. If your digital strategy is "rank on Google and everything else will follow," Copilot is proof that assumption is wrong.

What Manus Did

Manus is the newest and most interesting platform we tested. It operates differently from the others — rather than generating a response from a single model, Manus deploys autonomous AI agents that research across multiple sources before synthesizing an answer.

The pattern: Manus produced the most comprehensive responses and cited the widest variety of sources. It appeared to weight recently published, highly structured content more than any other platform. Businesses with blog posts published within the last 30-60 days that contained clear, structured information (headings, specific claims, data points) appeared in Manus's responses at a noticeably higher rate.

Manus also showed something we didn't see on other platforms: it sometimes cited a business's blog content directly as the basis for its recommendation. An HVAC company that had published a detailed guide about "how to choose an HVAC company in DFW" was recommended by Manus specifically because that content demonstrated expertise. Manus essentially used the business's own thought leadership content as evidence of their credibility.

What Manus ignored: Static, unchanging websites. Manus appeared to strongly penalize businesses that hadn't published new content or updated their site in months. Its research agents seem to interpret freshness as a signal of active business operations.

The 17% That Appeared Everywhere

Now for the 17% of cases where a business did appear across all six platforms. These businesses are the template for cross-platform AI visibility, and every one of them had:

Complete, consistent business information across the web. Identical name, address, phone number, and description on Google Business Profile, Bing Places, Apple Maps, Yelp, industry directories, and their own website. Zero ambiguity about who they are.

Comprehensive structured data on their website. Organization schema, LocalBusiness schema, Service schema, and FAQ schema at minimum. Every platform we tested showed improved citation rates for businesses with robust structured data.

Active third-party presence. Mentioned in at least 3-5 authoritative sources outside their own website — industry publications, local business roundups, guest articles, or expert quotes.

Deep, specific content. Not just service pages — detailed guides, educational content, and specific claims about their expertise, methodology, and results. Content that reads like it was written by a genuine expert, not a content mill.

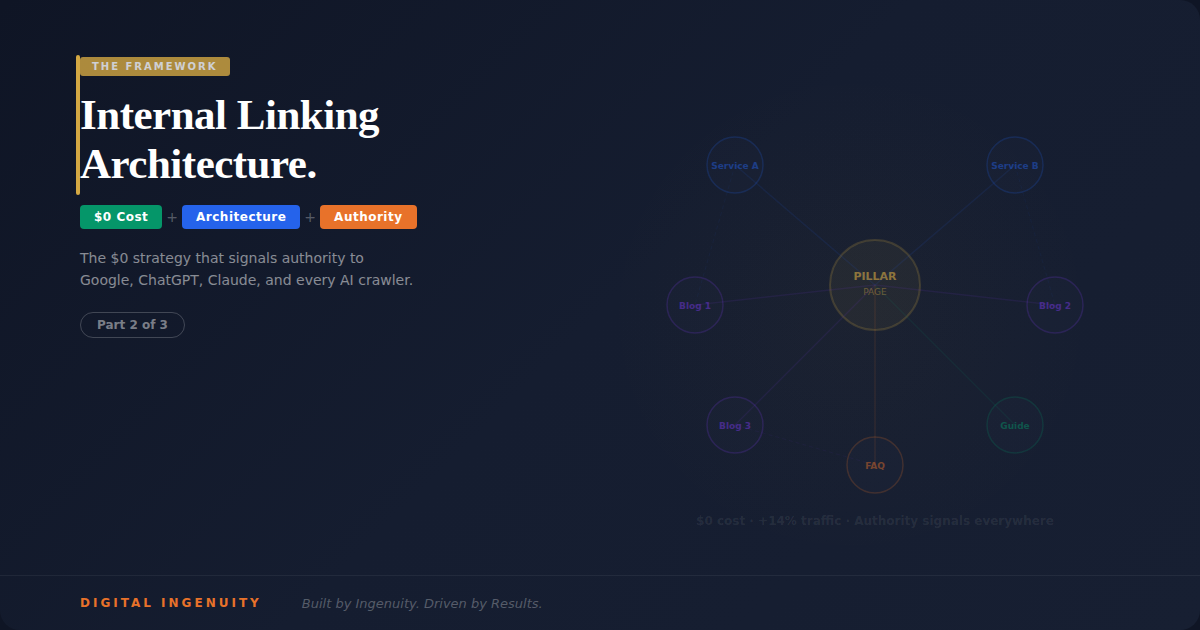

Active digital presence across multiple ecosystems. Google Business Profile AND Bing Places AND LinkedIn AND industry directories AND recent published content. The businesses that showed up everywhere had built presence everywhere.

Recent activity signals. New content published within 60 days. Recent Google reviews. Active social/professional profiles. Every platform interpreted freshness as a sign of an active, trustworthy business.
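The first trait, identical name, address, and phone number everywhere, is something you can audit mechanically once you have collected your listings. Here is a minimal sketch, assuming each listing's details have been copied into a dictionary by hand. The normalization rules (case, punctuation, a few common street abbreviations, digits-only phone numbers) are our own simplification, and the sample listings are hypothetical.

```python
import re

# A few common street abbreviations; extend as needed.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "suite": "ste",
                 "boulevard": "blvd", "drive": "dr", "parkway": "pkwy"}


def normalize_text(value: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse common abbreviations."""
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(w, w) for w in words)


def normalize_phone(value: str) -> str:
    """Keep only the last 10 digits so '+1 (214) 555-0100' matches '214.555.0100'."""
    return re.sub(r"\D", "", value)[-10:]


def nap_mismatches(listings: dict[str, dict[str, str]]) -> dict[str, set[str]]:
    """Return each NAP field whose normalized value differs across listings."""
    normalizers = {"name": normalize_text, "address": normalize_text, "phone": normalize_phone}
    mismatches = {}
    for field, normalize in normalizers.items():
        values = {normalize(listing[field]) for listing in listings.values()}
        if len(values) > 1:
            mismatches[field] = values
    return mismatches


# Hypothetical listings for one business.
listings = {
    "Website": {"name": "Example Air Co.",
                "address": "123 Example Street, Suite 4, Plano, TX",
                "phone": "(214) 555-0100"},
    "Google Business Profile": {"name": "Example Air Co",
                                "address": "123 Example St Ste 4 Plano TX",
                                "phone": "+1 214-555-0100"},
    "Bing Places": {"name": "Example Air Company",
                    "address": "123 Example St, Ste 4, Plano, TX",
                    "phone": "214.555.0100"},
}
print(nap_mismatches(listings))
```

With these sample listings, the address and phone variants normalize to the same values, so only the name field is flagged ("Example Air Co" versus "Example Air Company"). That is exactly the kind of small ambiguity the consistent businesses avoided.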

What This Means for Your Business

If you're only optimizing for Google, you're invisible on approximately half the AI platforms your customers are using. That's not speculation — it's what we measured.

The fragmentation of AI search means businesses need what we call cross-platform entity authority. Not six different strategies — one cohesive strategy that makes you visible, verifiable, and citable regardless of which platform a potential customer happens to ask.

The businesses winning on all six platforms aren't spending six times more effort. They're building a core foundation — structured data, consistent entity signals, deep content, third-party presence — that every platform can read and trust.

That foundation is what we engineer through the four-pillar framework: SEO ensures traditional search visibility. GEO ensures AI platforms cite you. AEO ensures you own the featured snippets AI draws from. VEO ensures voice assistants recommend you.

All four pillars working together is what gets you into that 17%.

The Methodology Appendix

For transparency, here's exactly how we conducted this experiment:

- Testing period: 14 days in March 2026

- Platforms tested: ChatGPT (GPT-4o), Claude (Claude 3.5 Sonnet), Gemini (Gemini Advanced), Perplexity (Pro), Microsoft Copilot, Manus

- Location context: All queries specified Dallas-Fort Worth area or specific DFW cities

- Control measures: Each query was run three times on different days to account for response variation. A business was counted as "recommended" only if it appeared in at least 2 of 3 runs.

- Limitations: AI responses can vary by session, account history, and timing. Results represent observed patterns, not guaranteed algorithmic rules. We documented what we found, not what platforms say they do.
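The 2-of-3 counting rule from the control measures can be expressed in a few lines. Here is a minimal sketch, assuming each run's output has already been reduced to a list of business names. The function name and the sample names are hypothetical.

```python
from collections import Counter


def recommended_businesses(runs: list[list[str]], min_runs: int = 2) -> set[str]:
    """Businesses that appeared in at least `min_runs` of the repeated runs."""
    counts = Counter()
    for run in runs:
        counts.update(set(run))  # count each business at most once per run
    return {name for name, n in counts.items() if n >= min_runs}


# Hypothetical results from three runs of the same query on one platform.
runs = [
    ["Example Air Co", "Cool Breeze HVAC", "North Texas Comfort"],
    ["Example Air Co", "North Texas Comfort"],
    ["Example Air Co", "Lone Star Cooling"],
]
print(sorted(recommended_businesses(runs)))  # ['Example Air Co', 'North Texas Comfort']
```

Counting each business at most once per run matters: a response that mentions the same business twice should not count as two appearances.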

This is Part 1 of 5 in The Search Lab series — original experiments documenting how AI search actually works.

Next in the series: We Added Schema Markup to a Site That Had None. Here's What Changed Across Google, ChatGPT, Claude, and Perplexity in 30 Days.

Your business is being recommended — or ignored — on six different AI platforms right now. Want to know where you stand? Book a free discovery call →

Read about our four-pillar approach to cross-platform search visibility: SEO + GEO + AEO + VEO →

Written by

Aaron Rodgers

Founder

Aaron leads Digital Ingenuity with a vision to transform how businesses grow through AI-powered marketing and automation.
