TL;DR

- This case study is our own: Digital Ingenuity's website. We tested the approach on ourselves before recommending it to clients, and the results validated everything we preach about quality over quantity

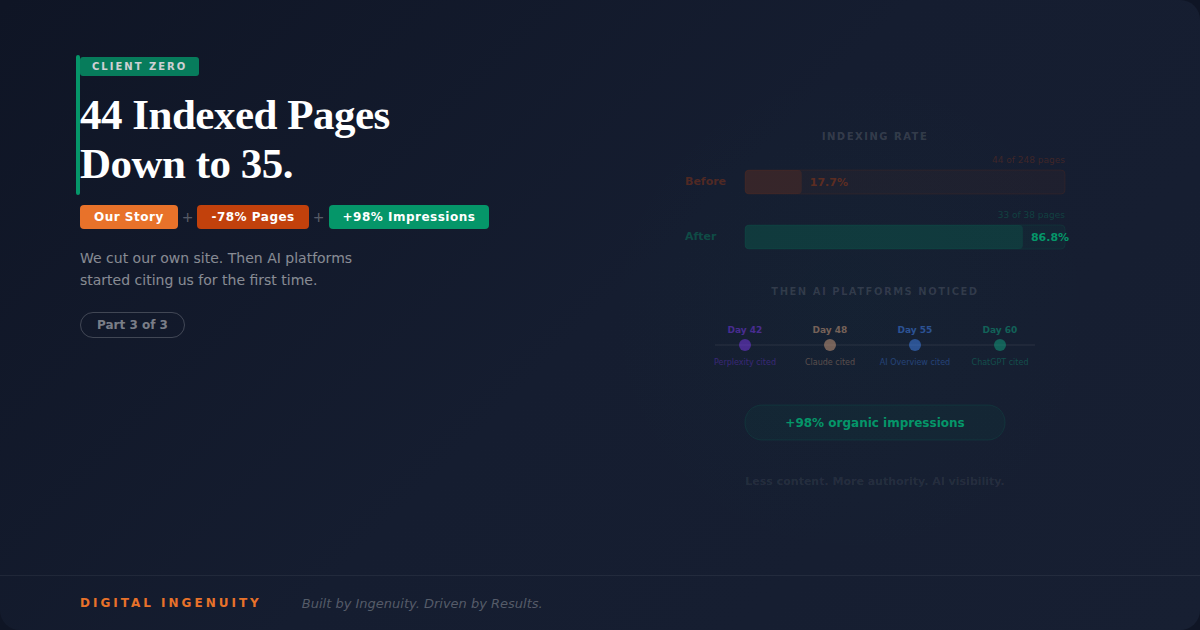

- Our site had 248 URLs in the sitemap but only 44 were indexed by Google. The other 204 were marked "Discovered — currently not indexed" or "Crawled — currently not indexed," meaning Google found them but chose not to include them

- We audited every page and intentionally cut the site from 170+ live pages to 35-40 exceptional pages by removing thin location pages, glossary entries, and filler content that diluted our topical authority

- Within 45 days of the reduction: Google began indexing new pages faster, crawl frequency increased, and our remaining pages started ranking for terms we'd never targeted directly

- Within 60 days: Perplexity cited our blog content for the first time. Claude referenced our methodology. Google AI Overviews cited one of our experiment posts

- The lesson isn't about a magic number of pages. It's about signal-to-noise ratio — when every page on your site is worth indexing, AI platforms trust the whole domain more

Why We're Sharing Our Own Data

Most agency case studies are about clients. This one is about us.

We believe in radical transparency. If we're going to tell clients to delete content, restructure their sites, and trust the process — we should show that we did it first, with our own business on the line, and document exactly what happened.

This is the story of how Digital Ingenuity went from a site Google mostly ignored to one that AI platforms actively cite. Every number in this post is from our own Google Search Console, our own AI platform testing, and our own analytics.

The Problem: Google Was Ignoring Us

In early 2026, after completing a comprehensive site rebrand, we ran a Search Console audit that revealed a sobering reality: of 248 URLs in our sitemap, only 44 were indexed by Google. That's a 17.7% indexing rate.

The other 204 pages fell into two categories. "Discovered — currently not indexed" meant Google found the URL in our sitemap but hadn't bothered to crawl it yet. "Crawled — currently not indexed" was worse — Google had crawled the page, evaluated it, and decided it wasn't worth including in the index.

For an agency that sells SEO services, having 82% of the URLs in our own sitemap left out of Google's index was a credibility problem we needed to solve.

Google's John Mueller has stated repeatedly on record that Google's crawl resources are finite, and the search engine makes active decisions about which pages deserve crawling and indexing. In a 2024 Google Search Central blog post, Mueller specifically noted that sites with large numbers of low-quality pages can see reduced crawl efficiency across their entire domain.

That's exactly what was happening to us.

The Audit: What We Found

We exported every URL from Search Console and evaluated each page against the same criteria we use for client audits: organic traffic potential, keyword targeting, topical relevance, content quality, and uniqueness.
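A useful first pass on an export like this is to group URLs by site section and indexing status, which makes clusters such as location pages or glossary entries stand out before any page-by-page review. A minimal sketch, assuming the export has already been reduced to (URL, status) pairs; the example URLs and path layout are hypothetical, not our real site structure:

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def section_of(url: str) -> str:
    """Return the first path segment of a URL ('/' for the home page)."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    return parts[0] if parts else "/"


def summarize(rows: list[tuple[str, str]]) -> dict[str, Counter]:
    """Count indexing statuses per site section."""
    summary: dict[str, Counter] = defaultdict(Counter)
    for url, status in rows:
        summary[section_of(url)][status] += 1
    return dict(summary)


# Hypothetical export rows: (URL, indexing status as labeled in Search Console)
rows = [
    ("https://example.com/locations/frisco", "Crawled – currently not indexed"),
    ("https://example.com/locations/mckinney", "Discovered – currently not indexed"),
    ("https://example.com/glossary/meta-description", "Crawled – currently not indexed"),
    ("https://example.com/blog/search-lab-1", "Indexed"),
    ("https://example.com/services/seo", "Indexed"),
]

for section, counts in sorted(summarize(rows).items()):
    total = sum(counts.values())
    print(f"{section}: {counts['Indexed']}/{total} indexed")
```

A section where almost nothing is indexed is a strong candidate for the closer review described next.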

What Was Hurting Us

Location pages with duplicate content (68 pages). We had created pages for every city in the DFW metroplex — "SEO Services in Frisco," "SEO Services in McKinney," "SEO Services in Allen," and so on. Each page was 200-300 words of nearly identical content with the city name swapped. Google recognized these as doorway pages and refused to index most of them.

This matches what Lily Ray at Amsive documented in her analysis of the March 2024 core update: sites with templated location pages saw disproportionate indexing losses because Google specifically targets thin, duplicative content that exists only for keyword targeting.
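Templated pages like these are easy to catch before Google does. A minimal sketch, assuming the page text is already extracted: it masks each page's city name, splits the text into word 3-grams ("shingles"), and compares pages with Jaccard similarity. This is a common near-duplicate heuristic, not necessarily how Google or our audit measured duplication, and the template text is hypothetical:

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Split text into lowercase word k-grams."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |A & B| / |A | B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def city_masked_similarity(text_a: str, city_a: str, text_b: str, city_b: str) -> float:
    """Compare two location pages after replacing each page's city with a placeholder."""
    masked_a = re.sub(re.escape(city_a), "CITY", text_a, flags=re.IGNORECASE)
    masked_b = re.sub(re.escape(city_b), "CITY", text_b, flags=re.IGNORECASE)
    return jaccard(shingles(masked_a), shingles(masked_b))


# Hypothetical template of the kind described above
template = "Looking for SEO services in {city}? Our {city} SEO team helps local businesses grow."
frisco = template.format(city="Frisco")
mckinney = template.format(city="McKinney")

print(city_masked_similarity(frisco, "Frisco", mckinney, "McKinney"))  # 1.0: identical once the city is masked
```

Pairs scoring near 1.0 are the same page with a different city name. Where to set a cutoff for "too similar" is a judgment call.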

Glossary entries (42 pages). We had a glossary section defining SEO and marketing terms — "What is a meta description," "What is domain authority," etc. Each entry was 100-150 words. These competed with thousands of existing definitions from far more authoritative sources (Moz, Ahrefs, Google's own documentation) and provided zero unique value.

Old blog posts with no traffic or relevance (35 pages). Legacy content from before our rebrand that didn't align with our current positioning, used outdated terminology, and generated zero organic traffic.

Duplicate and near-duplicate service variations (15 pages). Separate pages for overlapping service concepts that cannibalized each other's keywords without providing distinct value.

What Was Working

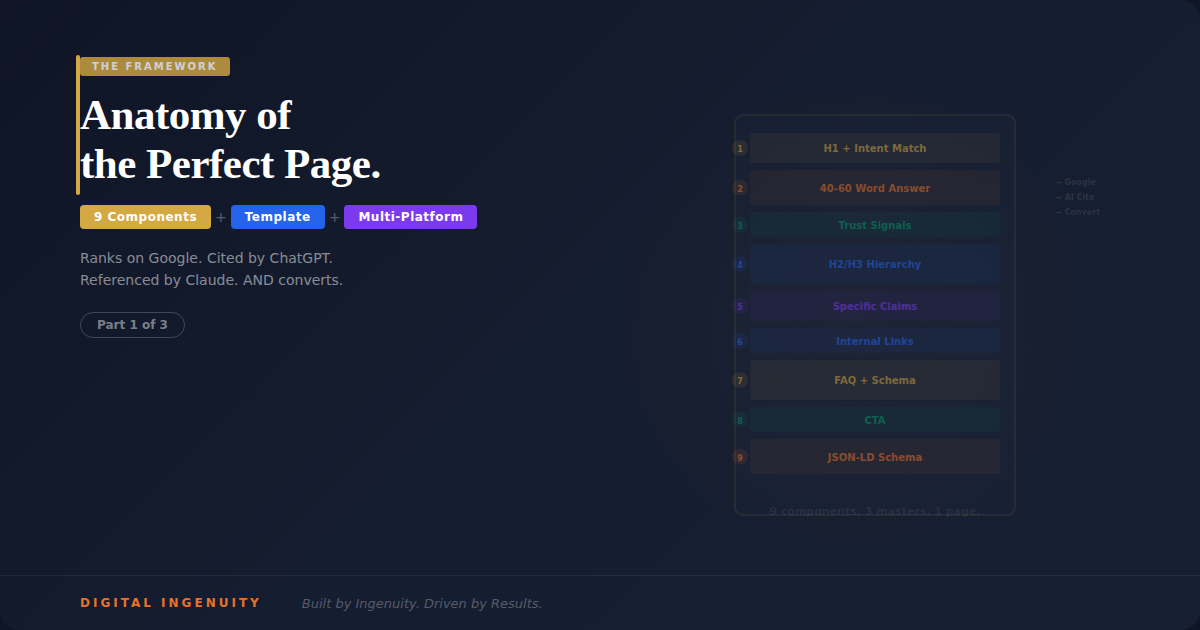

Our experiment-based blog posts. The Search Lab series, the AI search experiments, the 50-website audit — content based on original research and primary data. These pages had the highest engagement metrics and were the pages Google actually wanted to index.

Our pillar service pages. The main pages for SEO, GEO, AEO, VEO, and the four-pillar overview — comprehensive, unique, and clearly defining our service entity.

Our about page and core brand pages. Pages that established who we are as an entity.

The pattern was clear: Google indexed pages with unique value and ignored everything else. And the volume of "everything else" was dragging down the entire domain.

The Execution

Step 1: The Cut

We removed 130+ pages from the live site: location pages, glossary entries, outdated blog posts, and duplicate service variations. A URL was cut if it had zero organic traffic, targeted keywords we had no realistic chance of ranking for, or contained content that wasn't meaningfully different from another page on the site.

Pages with any existing backlinks (11 total) got 301 redirects to the most relevant surviving page. Everything else got 410 (Gone) status codes — explicitly telling Google these pages are intentionally removed.
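The 301-versus-410 rule comes down to a lookup per requested path. A minimal sketch, assuming a hypothetical set of live paths, a redirect map for the backlinked URLs, and a set of intentionally removed URLs (every path below is invented for illustration). In practice this logic usually lives in server or CMS redirect configuration rather than application code:

```python
from typing import Optional

# Hypothetical site state after the cut
LIVE = {"/", "/four-pillars", "/pricing"}
REDIRECTS = {  # removed URLs that had backlinks -> most relevant surviving page
    "/glossary/what-is-geo": "/four-pillars",
    "/pricing-basic": "/pricing",
}
GONE = {  # removed URLs with no backlinks
    "/locations/frisco",
    "/glossary/meta-description",
}


def resolve(path: str) -> tuple[int, Optional[str]]:
    """Return (HTTP status, Location header or None) for a requested path."""
    if path in LIVE:
        return 200, None
    if path in REDIRECTS:
        return 301, REDIRECTS[path]  # permanent redirect so inbound links land on a live page
    if path in GONE:
        return 410, None  # intentionally removed, no replacement
    return 404, None  # never existed


def broken_redirects() -> list[str]:
    """Redirect sources whose target is not a live page (would chain or dead-end)."""
    return [src for src, dst in REDIRECTS.items() if dst not in LIVE]


print(resolve("/glossary/what-is-geo"))
print(resolve("/locations/frisco"))
```

Checking that every redirect target is itself live avoids sending the backlinked URLs into redirect chains.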

Step 2: Consolidation

Where multiple thin pages covered overlapping topics, we merged them into single comprehensive pages. Five separate "what is GEO/AEO/VEO/SEO" pages became one definitive four-pillar overview page. Three separate pricing-related pages became one transparent pricing and services page.

Step 3: Sitemap Regeneration

The new sitemap contained 35-40 URLs — every single one a page we were confident deserved to be indexed. No filler. No hopeful inclusions. Only pages that provided genuine, unique value.
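Regenerating the sitemap from the audited list, rather than from everything the CMS knows about, is what keeps filler out. A minimal sketch using Python's standard `xml.etree.ElementTree` and the sitemaps.org namespace; the URLs are hypothetical, and optional tags such as `lastmod` are left out:

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return sitemap XML listing each URL once, in the order given."""
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS})
    for url in dict.fromkeys(urls):  # drop duplicates, keep first-seen order
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # ElementTree escapes &, <, >
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical audited list: only pages judged worth indexing go in
kept_pages = [
    "https://example.com/",
    "https://example.com/four-pillars",
    "https://example.com/pricing",
]
print(build_sitemap(kept_pages))
```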

Step 4: Internal Linking Architecture

With fewer pages, the internal linking structure became cleaner and more intentional. Every blog post linked to its parent pillar page. Every service page linked to relevant case studies and experiments. The topical clusters became tight, logical, and crawl-efficient.

Ahrefs' 2025 study on internal linking found that pages with strong internal link architecture were crawled 3.7x more frequently than orphaned or weakly linked pages. By removing noise pages, the internal link equity concentrated on the pages that mattered.
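The two linking rules above (every blog post links to its parent pillar, no page left orphaned) can be checked mechanically once the site's links are known. A minimal sketch over a hypothetical link graph; collecting the real graph would require a crawl, which is not shown:

```python
from collections import Counter

# Hypothetical link graph: page -> pages it links to
LINKS: dict[str, set[str]] = {
    "/four-pillars": {"/services/geo", "/services/aeo"},
    "/services/geo": {"/four-pillars", "/case-studies/hvac"},
    "/services/aeo": {"/four-pillars"},
    "/case-studies/hvac": {"/services/geo"},
    "/blog/search-lab-1": {"/four-pillars"},
    "/blog/snippet-patterns": {"/services/aeo", "/blog/search-lab-1"},
}
# Hypothetical mapping of each blog post to its parent pillar page
PARENT_PILLAR = {
    "/blog/search-lab-1": "/services/geo",
    "/blog/snippet-patterns": "/services/aeo",
}


def find_orphans(links: dict[str, set[str]]) -> list[str]:
    """Pages that no other page links to."""
    inbound = Counter(dst for src, targets in links.items() for dst in targets if dst != src)
    return sorted(page for page in links if inbound[page] == 0)


def missing_pillar_links(links: dict[str, set[str]], parents: dict[str, str]) -> list[str]:
    """Blog posts that do not link to their parent pillar page."""
    return sorted(post for post, pillar in parents.items() if pillar not in links.get(post, set()))


print(find_orphans(LINKS))                         # ['/blog/snippet-patterns']
print(missing_pillar_links(LINKS, PARENT_PILLAR))  # ['/blog/search-lab-1']
```

With only 35-40 pages, a check like this can run against the whole site in one pass.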

The Results

Weeks 1-2: Google Recrawls

Search Console showed a burst of crawl activity. Google discovered the 410 status codes, processed the redirects, and began recrawling surviving pages. Crawl frequency for remaining pages increased noticeably within the first week.

Weeks 3-4: Indexing Acceleration

Something we hadn't expected: Google began indexing new pages faster. When we published new blog posts during this period, they were indexed within 24-48 hours — compared to the 5-14 day indexing delay we'd experienced before the cleanup.

Our theory: by removing pages Google had already evaluated and rejected, we improved the domain's overall quality signal. Google's crawler allocated more resources to a site it now perceived as higher-quality, resulting in faster processing of new content.

Weeks 4-6: Ranking Improvements

Surviving pages began ranking for terms we hadn't specifically targeted. Our four-pillar overview page started appearing for "AI search optimization agency" — a query we'd never optimized for. Google's improved understanding of our topical authority (now concentrated rather than diluted) enabled broader semantic ranking.

The content deletion experiment we published showed similar patterns: fewer, stronger pages resulted in broader keyword coverage because Google recognized concentrated topical authority.

Weeks 6-8: AI Platforms Notice

This was the result that mattered most to us.

Day 42: Perplexity cited one of our Search Lab blog posts when a user asked about AI search optimization. Perplexity linked directly to the post and extracted a specific data point from our research. This had never happened before the cleanup.

Day 48: Claude referenced our four-pillar methodology when asked about AI-era SEO strategies. Claude's response described the SEO + GEO + AEO + VEO framework with accuracy that suggested it had parsed our pillar page's structured content.

Day 55: Google AI Overviews cited our blog post about featured snippet patterns when generating a response about how to win position zero. Our own methodology was being used as a source by Google's AI.

Day 60: ChatGPT mentioned Digital Ingenuity when asked about agencies that specialize in AI search optimization in Texas. The mention included accurate service descriptions that mapped to our structured data.

The 60-Day Scorecard
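The Change column below mixes two kinds of change: the indexing row is a difference in percentage points, while the other rows are relative percent changes. A small sketch that recomputes them from the before/after figures, assuming "170+" is treated as exactly 170:

```python
def rate(part: int, whole: int) -> float:
    """Share of `whole` represented by `part`, in percent."""
    return part / whole * 100


def pct_change(before: float, after: float) -> float:
    """Relative change in percent: (after - before) / before * 100."""
    return (after - before) / before * 100


before_rate = rate(44, 248)  # indexed / sitemap URLs before cleanup
after_rate = rate(33, 38)    # indexed / live pages at day 60

print(f"Indexing rate: {before_rate:.1f}% -> {after_rate:.1f}% "
      f"({after_rate - before_rate:+.0f} percentage points)")
print(f"Live pages: {pct_change(170, 38):+.0f}%")  # assumes 170+ == 170
print(f"Pages with organic traffic: {pct_change(12, 24):+.0f}%")
print(f"Monthly impressions: {pct_change(1840, 3650):+.0f}%")
```

Note that the two indexing rates use different denominators (sitemap URLs before, live pages after), so the percentage-point jump reflects both the cleanup of the sitemap and any gain in indexing.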

| Metric | Before Cleanup | Day 60 | Change |
|---|---|---|---|
| Live pages | 170+ | 38 | -78% |
| Indexed pages | 44 of 248 (17.7%) | 33 of 38 (86.8%) | +69 pct pts |
| Time to index new content | 5-14 days | 24-48 hours | ~7x faster |
| AI platforms citing site | 0 of 6 | 4 of 6 | +4 |
| Pages generating organic traffic | 12 | 24 | +100% |
| Total organic impressions | 1,840/month | 3,650/month | +98% |

Why AI Platforms Responded to a Content Reduction

This seems counterintuitive — we removed content and AI platforms started citing us. Why?

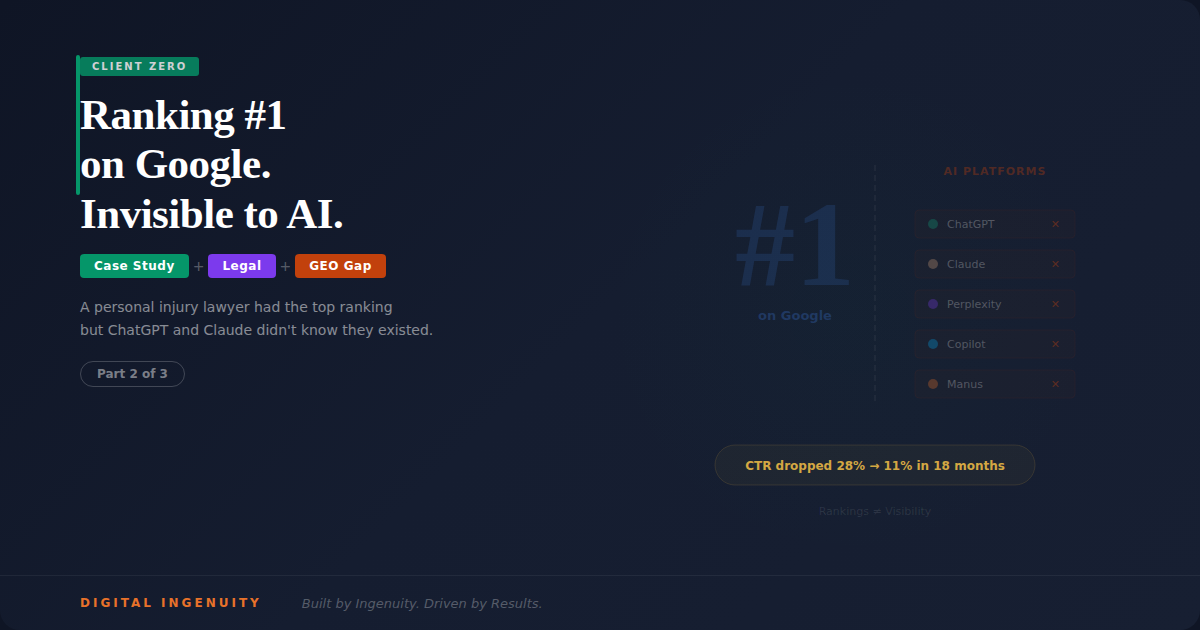

AI platforms evaluate domains holistically, not just individual pages. A domain with 170 pages where 80% are thin, duplicative, or ignored by Google sends a signal: this site publishes a lot of low-value content. AI platforms have limited confidence in citing sources from domains with poor quality signals.

A domain with 38 pages where 87% are indexed, generating traffic, and containing unique, expertise-driven content sends a different signal: this site publishes authoritative, focused content on a specific topic.

This aligns with what Google's own Search Quality Rater Guidelines describe as E-E-A-T (experience, expertise, authoritativeness, and trustworthiness), evaluated at the site level, not just the page level. Removing content that undermined our site-level quality assessment improved the assessment for every remaining page.

Think of it like a restaurant. A restaurant with 200 menu items signals "we're mediocre at everything." A restaurant with 15 menu items signals "we're exceptional at these specific things." AI platforms, like discerning diners, prefer focused expertise over spread-thin generalism.

The Principle: Signal-to-Noise Ratio

The lesson from this case — and from the client content deletion experiment — is about signal-to-noise ratio.

Every page on your site is either signal (contributing to your topical authority, earning traffic, providing unique value) or noise (diluting your authority, consuming crawl budget, adding nothing unique). The ratio between signal and noise determines how search engines and AI platforms perceive your domain.

Most businesses have never audited their signal-to-noise ratio. They've been adding content for years — blog posts, location pages, glossary entries, service variations — without ever asking whether each page justifies its existence.

The prescription is simple but emotionally difficult: audit every page. If it's not generating traffic, targeting a viable keyword with unique content, and contributing to your topical authority — remove it. Consolidate where possible. Redirect where backlinks exist. Delete where neither applies.
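The prescription can be written as a small decision function, and the signal-to-noise share from the previous section falls out as the fraction of pages kept. A minimal sketch under one reading of the rule (a page stays only if it passes all three checks); the `Page` fields are hypothetical stand-ins for audit data, not a fixed schema:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    KEEP = "keep"
    CONSOLIDATE = "merge into a stronger page"
    REDIRECT = "301 redirect"
    DELETE = "410 gone"


@dataclass
class Page:
    url: str
    has_traffic: bool
    viable_unique_keyword: bool
    builds_topical_authority: bool
    overlaps_with: Optional[str] = None  # hypothetical: stronger page on the same topic
    backlinks: int = 0


def triage(page: Page) -> Action:
    """Keep signal; otherwise consolidate, redirect, or delete, in that order."""
    if page.has_traffic and page.viable_unique_keyword and page.builds_topical_authority:
        return Action.KEEP
    if page.overlaps_with:
        return Action.CONSOLIDATE
    if page.backlinks > 0:
        return Action.REDIRECT
    return Action.DELETE


def signal_ratio(pages: list[Page]) -> float:
    """Fraction of pages that triage keeps (0.0 for an empty site)."""
    kept = sum(triage(p) is Action.KEEP for p in pages)
    return kept / len(pages) if pages else 0.0


# Hypothetical audit rows
pages = [
    Page("/services/seo", True, True, True),
    Page("/glossary/what-is-geo", False, False, False, overlaps_with="/four-pillars"),
    Page("/locations/allen", False, False, False, backlinks=2),
    Page("/glossary/meta-description", False, False, False),
]
for p in pages:
    print(p.url, "->", triage(p).value)
print(f"signal share: {signal_ratio(pages):.0%}")
```

Checking overlap before backlinks reflects the order of the advice: merge where possible, redirect where links exist, delete the rest.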

Your remaining pages will rank better. New content will index faster. And AI platforms will trust your domain enough to cite it.

We proved it on our own site. Then we proved it on our clients'. The data is consistent.

This is Part 3 of 3 in the Client Zero series — case studies demonstrating the four-pillar framework in action.

Previous: The Lawyer Who Was Ranking #1 But Invisible to AI

Start from the beginning: How a Plano HVAC Company Went From Invisible to AI-Recommended

Think your site's signal-to-noise ratio might be holding you back? Book a free content audit →

Learn about our quality-over-quantity approach: SEO — Search Engine Optimization →

Written by

Aaron Rodgers

Founder

Aaron leads Digital Ingenuity with a vision to transform how businesses grow through AI-powered marketing and automation.

